Last April, a campaign ad appeared on the Republican National Committee’s YouTube channel. The ad showed a series of images: President Joe Biden celebrating his reelection, U.S. city streets with shuttered banks and riot police, and immigrants surging across the U.S.-Mexico border. The video’s caption read: “An AI-generated look into the country’s possible future if Joe Biden is re-elected in 2024.”

While that ad was up front about its use of AI, most faked photos and videos are not: That same month, a fake video clip circulated on social media that purported to show Hillary Clinton endorsing the Republican presidential candidate Ron DeSantis. The extraordinary rise of generative AI in the last few years means that the 2024 U.S. election campaign won’t just pit one candidate against another; it will also be a contest of truth versus lies. And the U.S. election is far from the only high-stakes electoral contest this year. According to the Integrity Institute, a nonprofit focused on improving social media, 78 countries are holding major elections in 2024.

Fortunately, many people have been preparing for this moment. One of them is Andrew Jenks, director of media provenance projects at Microsoft. Synthetic images and videos, also called deepfakes, are “going to have an impact” in the 2024 U.S. presidential election, he says. “Our goal is to mitigate that impact as much as possible.” Jenks is chair of the Coalition for Content Provenance and Authenticity (C2PA), an organization that’s developing technical methods to document the origin and history of digital-media files, both real and fake. In November, Microsoft also launched an initiative to help political campaigns use content credentials.

The C2PA group brings together the Adobe-led Content Authenticity Initiative and a media provenance effort called Project Origin; in 2021 it released its initial standards for attaching cryptographically secure metadata to image and video files. In its system, any alteration of the file is automatically reflected in the metadata, breaking the cryptographic seal and making any tampering evident. If the person altering the file uses a tool that supports content credentialing, information about the changes is added to the manifest that travels with the image.
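
To make the idea of a cryptographic seal concrete, here is a minimal Python sketch. It is not the C2PA format or any real tool’s API: the `seal` and `verify` functions, the manifest fields, and the demo key are all hypothetical, and an HMAC from the standard library stands in for the certificate-backed digital signatures a real provenance system would use.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. A real provenance system would use public-key
# signatures backed by certificates; HMAC is only a stdlib stand-in here.
SIGNING_KEY = b"demo-key-not-for-production"


def seal(asset: bytes, assertions: list[str]) -> dict:
    """Build a toy manifest that binds the assertions to the asset's hash."""
    claim = {
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
        "assertions": assertions,
    }
    payload = json.dumps(claim, sort_keys=True).encode()
    signature = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    return {"claim": claim, "signature": signature}


def verify(asset: bytes, manifest: dict) -> bool:
    """Return True only if neither the asset nor the claim has changed."""
    payload = json.dumps(manifest["claim"], sort_keys=True).encode()
    expected = hmac.new(SIGNING_KEY, payload, hashlib.sha256).hexdigest()
    if not hmac.compare_digest(expected, manifest["signature"]):
        return False  # the claim itself was edited after signing
    current_hash = hashlib.sha256(asset).hexdigest()
    return manifest["claim"]["asset_sha256"] == current_hash


photo = b"raw image bytes"
manifest = seal(photo, ["captured by newsroom camera"])
print(verify(photo, manifest))            # True
print(verify(photo + b"edit", manifest))  # False
```

Changing even one byte of the file changes its hash, so the stored claim no longer matches; editing the claim to cover up the change invalidates the signature instead.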

Since releasing the standards, the group has been further developing the open-source specifications and implementing them with leading media companies; the BBC, the Canadian Broadcasting Corp. (CBC), and The New York Times are all C2PA members. For the media companies, content credentials are a way to build trust at a time when rampant misinformation makes it easy for people to cry “fake” on anything they disagree with (a phenomenon known as the liar’s dividend). “Having your content be a beacon shining through the murk is really important,” says Laura Ellis, the BBC’s head of technology forecasting.

This year, deployment of content credentials will begin in earnest, spurred by new AI regulations in the United States and elsewhere. “I think 2024 will be the first time my grandmother runs into content credentials,” says Jenks.

Why do we need content credentials?

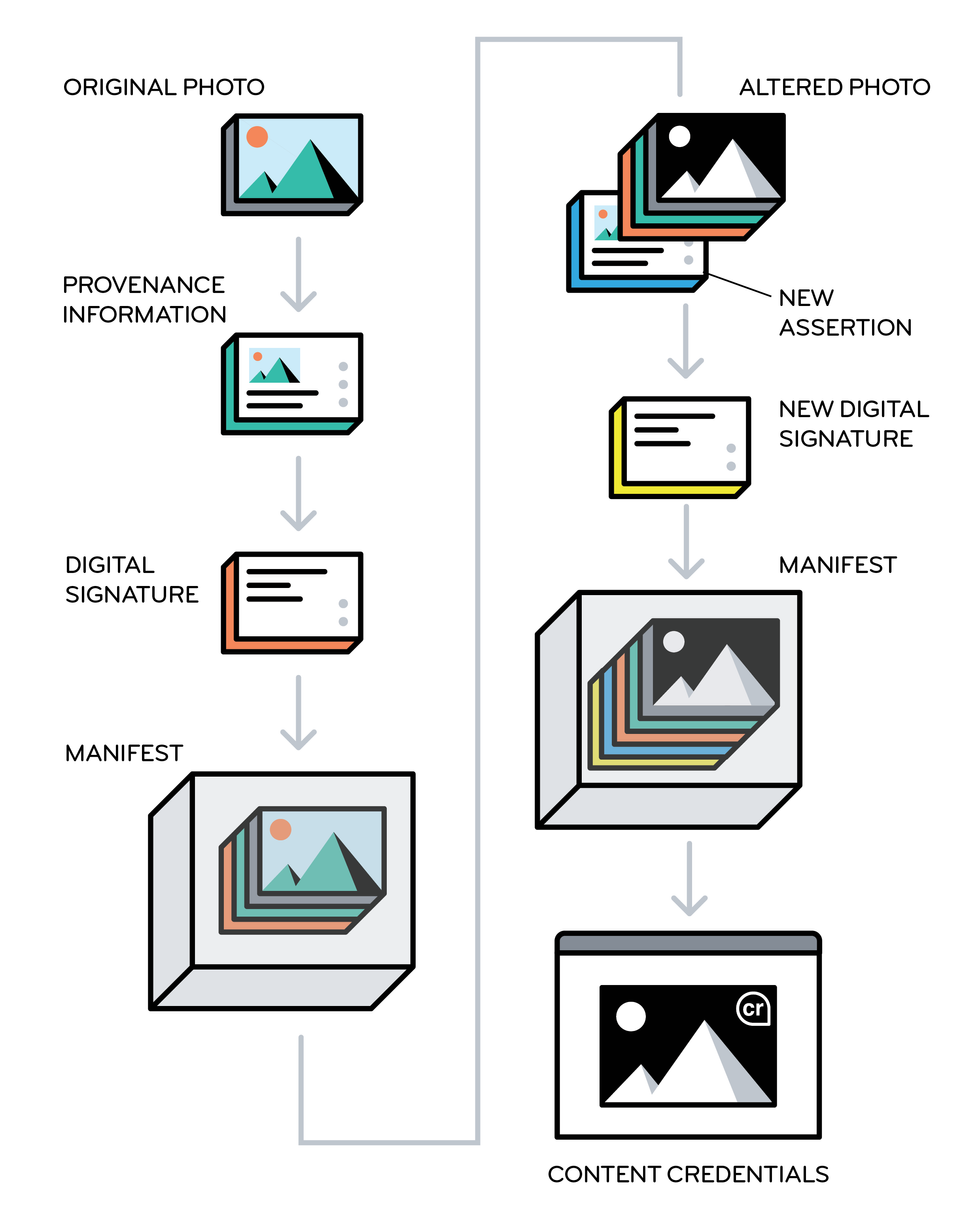

In the content-credentials system, an original photo is supplemented with provenance information and a digital signature that are bundled together in a tamper-evident manifest. If another user alters the photo using an approved tool, new assertions are added to the manifest. When the image shows up on a Web page, viewers can click the content-credentials logo for information about how the image was created and altered. C2PA

The crux of the problem is that image-generating tools like DALL-E 2 and Midjourney make it easy for anyone to create realistic-but-fake photos of events that never happened, and similar tools exist for video. While the major generative-AI platforms have protocols to prevent people from creating fake photos or videos of real people, such as politicians, plenty of hackers delight in “jailbreaking” these systems and finding ways around the safety checks. And less-reputable platforms have fewer safeguards.

Against this backdrop, a few big media organizations are making a push to use the C2PA’s content-credentials system to allow Internet users to check the manifests that accompany validated images and videos. Images that have been authenticated by the C2PA system can include a little “cr” icon in the corner; users can click on it to see whatever information is available for that image: when and how the image was created, who first published it, what tools they used to alter it, how it was altered, and so on. However, viewers will see that information only if they’re using a social-media platform or application that can read and display content-credential data.

The same system can be used by AI companies that make image- and video-generating tools; in that case, the synthetic media that’s been created would be labeled as such. Some companies are already on board: Adobe, a cofounder of C2PA, generates the relevant metadata for every image that’s created with its image-generating tool, Firefly, and Microsoft does the same with its Bing Image Creator.

The move toward content credentials comes as enthusiasm fades for automated deepfake-detection systems. According to the BBC’s Ellis, “we decided that deepfake-detection was a war-game space,” meaning that the best current detector could be used to train an even better deepfake generator. The detectors also aren’t very good. In 2020, Meta’s Deepfake Detection Challenge awarded top prize to a system that had only 65 percent accuracy in distinguishing between real and fake.

While only a few companies are integrating content credentials so far, regulations are currently being crafted that will encourage the practice. The European Union’s AI Act, now being finalized, requires that synthetic content be labeled. And in the United States, the White House recently issued an executive order on AI that requires the Commerce Department to develop guidelines for both content authentication and labeling of synthetic content.

Bruce MacCormack, chair of Project Origin and a member of the C2PA steering committee, says the big AI companies started down the path toward content credentials in mid-2023, when they signed voluntary commitments with the White House that included a pledge to watermark synthetic content. “They all agreed to do something,” he notes. “They didn’t agree to do the same thing. The executive order is the driving function to force everybody into the same space.”

What will happen with content credentials in 2024

Some people liken content credentials to a nutrition label: Is this junk media or something made with real, wholesome ingredients? Tessa Sproule, the CBC’s director of metadata and information systems, says she thinks of it as a chain of custody like the one used to track evidence in legal cases: “It’s secure information that can grow through the content life cycle of a still image,” she says. “You stamp it at the input, and then as we manipulate the image through cropping in Photoshop, that information is also tracked.”
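
The chain-of-custody idea can be illustrated with a small hash chain in Python. This is only a sketch under stated assumptions: `append_step`, `chain_intact`, and the entry fields are hypothetical names invented for the example, not part of the C2PA specification or the CBC’s system, and the entries are unsigned.

```python
import hashlib
import json


def entry_hash(entry: dict) -> str:
    """Hash one history entry in a stable, order-independent way."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


def append_step(history: list[dict], action: str, asset: bytes) -> list[dict]:
    """Return a new history with one more entry linked to the previous one."""
    prev = entry_hash(history[-1]) if history else None
    entry = {
        "action": action,
        "asset_sha256": hashlib.sha256(asset).hexdigest(),
        "prev": prev,  # link to the entry before this one
    }
    return history + [entry]


def chain_intact(history: list[dict]) -> bool:
    """Check that every entry still points at an unmodified predecessor."""
    for i, entry in enumerate(history):
        expected = entry_hash(history[i - 1]) if i else None
        if entry["prev"] != expected:
            return False
    return True


history = append_step([], "captured", b"original pixels")
history = append_step(history, "cropped in Photoshop", b"cropped pixels")
print(chain_intact(history))   # True

# Rewriting an earlier step breaks the link stored in the later one.
tampered = [dict(e) for e in history]
tampered[0]["action"] = "generated by AI"
print(chain_intact(tampered))  # False
```

Because each entry records the hash of the one before it, rewriting an earlier step is detectable from the later ones. The newest entry has no successor to protect it, which is why a real system would also sign the record.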

Sproule says her team has been overhauling internal image-management systems and designing the user experience with layers of information that users can dig into, depending on their level of interest. She hopes to debut, by mid-2024, a content-credentialing system that will be visible to any external viewer using a type of software that recognizes the metadata. Sproule says her team also wants to go back into their archives and add metadata to those files.

At the BBC, Ellis says they’ve already done trials of adding content-credential metadata to still images, but “where we need this to work is on the [social media] platforms.” After all, viewers are less likely to doubt the authenticity of a photo on the BBC website than the same image encountered on Facebook. The BBC and its partners have also been running workshops with media organizations to talk about integrating content-credentialing systems. Recognizing that it may be hard for small publishers to adapt their workflows, Ellis’s group is also exploring the idea of “service centers” to which publishers could send their images for validation and certification; the images would be returned with cryptographically hashed metadata attesting to their authenticity.

MacCormack notes that the early adopters aren’t necessarily keen to begin advertising their content credentials, because they don’t want Internet users to doubt any image or video that doesn’t have the little “cr” icon in the corner. “There has to be a critical mass of information that has the metadata before you tell people to look for it,” he says.

Going beyond the media industry, Microsoft’s new initiative for political campaigns, called Content Credentials as a Service, is intended to help candidates control their own images and messages by enabling them to stamp authentic campaign material with secure metadata. A Microsoft blog post said that the service “will launch in the spring as a private preview” that’s available for free to political campaigns. A spokesperson said that Microsoft is exploring ideas for this service, which “could eventually become a paid offering” that’s more broadly available.

The big social-media platforms haven’t yet made public their plans for using and displaying content credentials, but Claire Leibowicz, head of AI and media integrity for the Partnership on AI, says they’ve been “very engaged” in discussions. Companies like Meta are now thinking about the user experience, she says, and are also pondering practicalities. She cites compute requirements as an example: “If you add a watermark to every piece of content on Facebook, will that make it have a lag that makes users sign off?” Leibowicz expects regulations to be the biggest catalyst for content-credential adoption, and she’s eager for more information about how Biden’s executive order will be enacted.

Even before content credentials start showing up in users’ feeds, social-media platforms can use that metadata in their filtering and ranking algorithms to find trustworthy content to recommend. “The value happens well before it becomes a consumer-facing technology,” says Project Origin’s MacCormack. The systems that manage information flows from publishers to social-media platforms “will be up and running well before we start educating consumers,” he says.

If social-media platforms are the end of the image-distribution pipeline, the cameras that record images and videos are the beginning. In October, Leica unveiled the first camera with built-in content credentials; C2PA member companies Nikon and Canon have also made prototype cameras that incorporate credentialing. But hardware integration should be considered “a development step,” says Microsoft’s Jenks. “In the best case, you start at the lens when you capture something, and you have this digital chain of trust that extends all the way to where something is consumed on a Web page,” he says. “But there’s still value in just doing that last mile.”

This article appears in the January 2024 print issue as “This Election Year, Look for Content Credentials.”